The hum of traditional air conditioning systems is giving way to the gentle flow of coolant through pipes as data centers worldwide make a fundamental shift in how they keep their hardware from overheating. With artificial intelligence workloads generating unprecedented amounts of heat, facility operators are discovering that the cooling methods that worked for decades are no longer sufficient for today’s computational demands.

Major cloud providers including Microsoft, Google, and Meta have already begun deploying liquid cooling systems across their facilities. The transition represents more than just a technical upgrade – it’s becoming essential for supporting the massive GPU clusters required for training large language models and running AI inference at scale.

The Heat Problem That Air Cannot Solve

Modern AI processors generate significantly more heat per square inch than traditional server hardware. NVIDIA’s latest H100 GPUs, commonly used for AI training, can consume up to 700 watts each, while older server processors typically maxed out around 200 watts. When dozens of these chips are packed into dense configurations for AI workloads, the heat output quickly overwhelms conventional air cooling systems.

Traditional data center cooling relies on raised floors that distribute cold air upward through perforated tiles. This approach worked well when server racks consumed 5-10 kilowatts of power, but today’s AI-optimized racks can draw 40-80 kilowatts or more. The volume of cold air required to maintain safe operating temperatures becomes impractical, creating hot spots and inefficient cooling patterns.
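To make the airflow problem concrete, here is a rough sketch based on the standard sensible-heat relation P = ρ · c_p · V̇ · ΔT. The air properties are approximate room-temperature values, and the 12 K temperature rise across the rack is an illustrative assumption, not a figure from any operator.

```python
# Rough airflow estimate from P = rho * cp * V_dot * dT, solved for V_dot.
AIR_DENSITY = 1.2           # kg/m^3, approximate value near room temperature
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg*K), approximate
M3S_TO_CFM = 2118.88        # cubic metres per second -> cubic feet per minute


def required_airflow_m3s(rack_power_w: float, delta_t_k: float) -> float:
    """Airflow needed to remove rack_power_w with an air temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return rack_power_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_k)


# Rack powers taken from the ranges quoted in the text; delta_t_k is assumed.
for rack_kw in (10, 40, 80):
    flow = required_airflow_m3s(rack_kw * 1000, delta_t_k=12.0)
    print(f"{rack_kw} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:.0f} CFM)")
```

Under these assumptions a 10 kW rack needs roughly 0.7 cubic metres of air per second, while an 80 kW rack needs about 5.5. The requirement grows linearly with rack power, which is why it becomes hard to deliver through perforated floor tiles.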

Intel’s analysis shows that air cooling becomes fundamentally limited at around 250 watts per processor. Beyond this threshold, even the most sophisticated air circulation systems struggle to maintain the precise temperature control required for optimal performance. The physics are straightforward: air has limited heat capacity compared to liquid coolants, making it inadequate for high-density computing loads.
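The heat-capacity gap can be put into numbers with a short comparison of volumetric heat capacity (density times specific heat). The property values below are rounded textbook figures for air and water near room temperature. Water is used here only as a reference liquid, since dielectric fluids and glycol mixtures have different properties.

```python
# Approximate room-temperature properties (rounded textbook values).
FLUIDS = {
    "air": {"density": 1.2, "specific_heat": 1005.0},      # kg/m^3, J/(kg*K)
    "water": {"density": 998.0, "specific_heat": 4182.0},  # kg/m^3, J/(kg*K)
}


def volumetric_heat_capacity(name: str) -> float:
    """Heat absorbed per cubic metre per kelvin of temperature rise, in J/(m^3*K)."""
    props = FLUIDS[name]
    return props["density"] * props["specific_heat"]


ratio = volumetric_heat_capacity("water") / volumetric_heat_capacity("air")
print(f"Water holds about {ratio:.0f}x more heat per unit volume than air")
```

With these values the ratio comes out at roughly 3,500, so a given volume of water carries away thousands of times more heat than the same volume of air for the same temperature rise.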

Liquid Cooling Technologies Gaining Traction

Data center operators are implementing several types of liquid cooling, each suited to different deployment scenarios. Direct-to-chip cooling uses cold plates mounted directly on processors, circulating coolant through small channels to absorb heat at the source. This method can handle the highest heat densities but requires modifications to server designs.

Immersion cooling takes a more radical approach by submerging entire servers in dielectric fluid, which doesn’t conduct electricity. Companies like GRC and Submer have developed systems where servers operate fully submerged in specialized tanks. The fluid absorbs heat directly from all components, eliminating hot spots and reducing the need for internal fans.

Rear-door heat exchangers offer a middle ground by attaching liquid cooling units to the back of standard server racks. These systems use existing server designs while providing liquid cooling capacity. Major manufacturers including IBM and Lenovo now offer rear-door cooling as standard options for high-performance computing configurations.

Energy Efficiency and Cost Considerations

The energy savings from liquid cooling are substantial. Traditional data center cooling can account for 30-40% of total facility power consumption, while liquid cooling systems typically reduce this to 10-15%. The improved efficiency comes from the superior heat transfer properties of liquids and the ability to use higher operating temperatures.

Liquid cooling systems can operate effectively with coolant temperatures of 45-50 degrees Celsius, compared to the 20-25 degree air temperatures required for traditional cooling. Because coolant this warm is hotter than the outside air for most of the year, data centers can reject heat to the outdoors during more hours, reducing mechanical cooling requirements.

The cost analysis becomes favorable when considering total cost of ownership. While liquid cooling systems have higher upfront installation costs, the ongoing energy savings and increased computing density often justify the investment. Data centers can pack more computing power into the same footprint, improving revenue per square foot while reducing operational expenses.

Several hyperscale operators report power usage effectiveness (PUE) improvements from 1.4-1.6 with traditional cooling to 1.1-1.2 with liquid cooling systems. These efficiency gains translate directly to reduced carbon emissions and operational costs, making liquid cooling attractive from both environmental and financial perspectives.
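The PUE figures can be related to facility overhead with a little arithmetic. PUE is total facility power divided by IT power, so the share of total power that does not reach IT equipment is 1 − 1/PUE. The sketch below applies this to the PUE values quoted above. The 1,000 kW IT load is a hypothetical figure chosen for illustration, and the overhead share includes power distribution losses and lighting as well as cooling.

```python
def overhead_share(pue: float) -> float:
    """Fraction of total facility power not delivered to IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power for a given IT load and PUE."""
    return it_load_kw * pue


IT_LOAD_KW = 1000.0  # hypothetical IT load, for illustration only

for pue in (1.1, 1.2, 1.4, 1.6):
    total = facility_power_kw(IT_LOAD_KW, pue)
    print(f"PUE {pue}: {total:.0f} kW total, {overhead_share(pue):.1%} overhead")
```

A PUE of 1.4-1.6 corresponds to roughly 29-38% of facility power going to overhead, and 1.1-1.2 to roughly 9-17%, which is broadly in line with the cooling shares quoted earlier in this section.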

Implementation Challenges and Solutions

The transition to liquid cooling isn’t without obstacles. Legacy data center infrastructure wasn’t designed for liquid distribution systems, requiring significant modifications to accommodate coolant loops, pumps, and heat exchangers. Facility operators must also develop new maintenance procedures and staff training programs.

Leak prevention is a critical concern, though many modern liquid cooling systems use non-conductive coolants that won’t damage electronics if spills occur. Advanced monitoring systems track coolant pressure, temperature, and flow rates continuously, alerting operators to potential issues before they become problems.
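As a minimal sketch of the pressure, temperature, and flow monitoring described above, the snippet below checks a single coolant-loop reading against fixed limits. The class names, field names, and threshold values are all hypothetical. Real systems take their limits from the cooling vendor's specifications and typically add trend analysis on top of simple thresholds.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class CoolantReading:
    pressure_kpa: float
    supply_temp_c: float
    flow_lpm: float  # litres per minute


@dataclass(frozen=True)
class Limits:
    # Hypothetical thresholds, for illustration only.
    min_pressure_kpa: float = 150.0
    max_supply_temp_c: float = 50.0
    min_flow_lpm: float = 20.0


def check_reading(reading: CoolantReading, limits: Limits = Limits()) -> list[str]:
    """Return a list of alert messages; an empty list means the reading is in range."""
    alerts = []
    if reading.pressure_kpa < limits.min_pressure_kpa:
        alerts.append("low pressure: possible leak or pump fault")
    if reading.supply_temp_c > limits.max_supply_temp_c:
        alerts.append("supply temperature above limit")
    if reading.flow_lpm < limits.min_flow_lpm:
        alerts.append("low flow: possible blockage or pump fault")
    return alerts


print(check_reading(CoolantReading(pressure_kpa=120.0, supply_temp_c=47.0, flow_lpm=25.0)))
```

A pressure drop with otherwise normal temperature and flow, as in the example reading, is the kind of early signal that lets operators investigate before hardware is affected.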

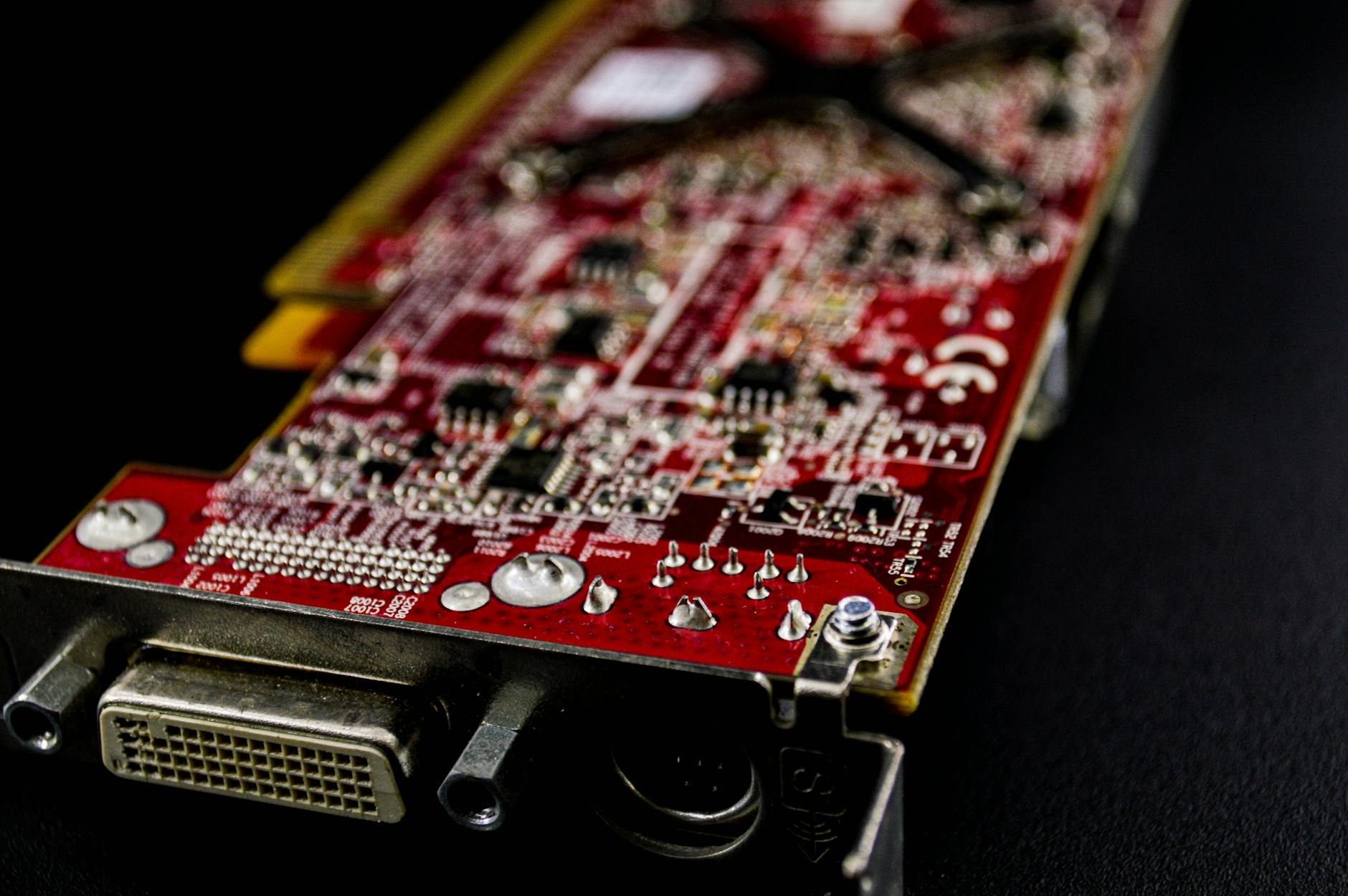

Supply chain considerations are becoming important as demand for liquid cooling components increases, and manufacturers are scaling production of cold plates, pumps, and specialized coolants in response. The semiconductor industry’s experience with graphics card manufacturing challenges has highlighted the importance of building resilient supply chains for critical cooling components.

Standardization efforts are underway to establish common interfaces and specifications for liquid cooling systems. Organizations like the Open Compute Project are developing reference designs that allow different vendors’ cooling components to work together, reducing integration complexity for data center operators.

The Future of Data Center Cooling

The shift toward liquid cooling aligns with broader trends in data center efficiency and sustainability. As AI workloads continue growing and processors become more powerful, the heat management challenge will only intensify. Liquid cooling provides a scalable solution that can adapt to future hardware generations without fundamental infrastructure changes.

Emerging applications like quantum computing and advanced AI training will likely require even more sophisticated cooling approaches. Some research facilities are already experimenting with cryogenic cooling for quantum processors, while others explore using waste heat from data centers for district heating systems.

The transformation happening in data centers mirrors the evolution seen in other computing areas, such as the recent developments in energy-efficient processor design. As the industry prioritizes both performance and sustainability, liquid cooling represents a practical solution that addresses immediate needs while providing a foundation for future growth in AI and high-performance computing applications.

Frequently Asked Questions

Why can’t traditional air cooling handle AI workloads?

AI processors like NVIDIA’s H100 GPUs can draw up to 700 watts each, far exceeding the roughly 250 watts per processor at which air cooling becomes fundamentally limited.

What are the main types of liquid cooling for data centers?

The main types are direct-to-chip cooling, immersion cooling, and rear-door heat exchangers, each suited to different deployment scenarios and heat densities.