Alexa now knows when you’re stressed. Google Assistant can detect when you’re frustrated. Amazon’s latest Echo devices analyze vocal patterns to identify emotional states, marking a significant shift in how we interact with voice technology.

Major tech companies are integrating emotional intelligence capabilities into their voice assistants, moving beyond simple command recognition to understanding the emotional context of user requests. This evolution represents the next frontier in human-computer interaction, where devices don’t just hear what we say but comprehend how we feel when we say it.

The Science Behind Emotional Voice Recognition

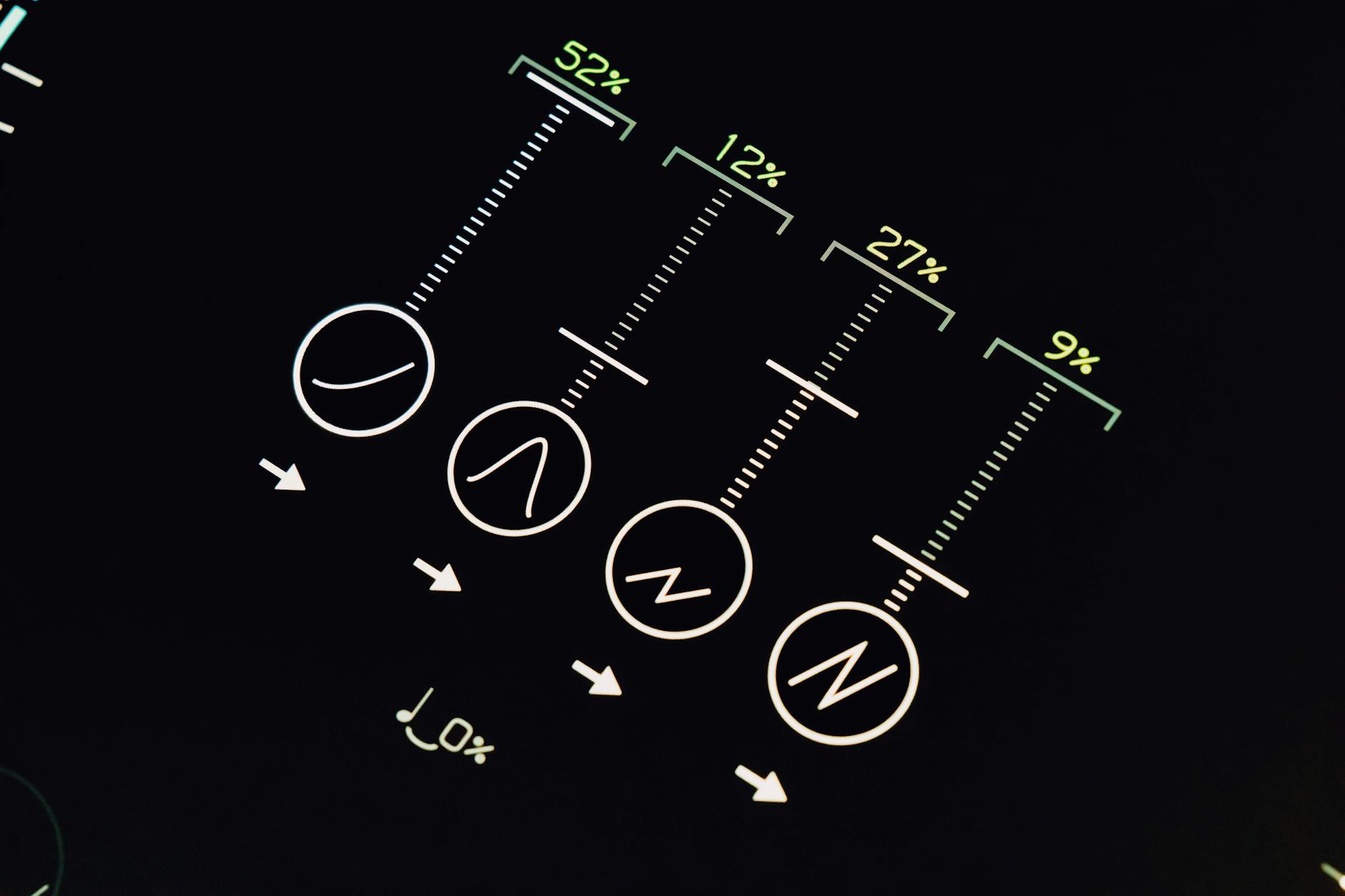

Voice assistants now analyze multiple vocal indicators to determine emotional states. Pitch variations, speaking pace, vocal tremor, and pause patterns all contribute to emotional assessment algorithms. When someone speaks quickly with a higher pitch, the system might interpret that as stress or excitement. Slower speech with longer pauses could indicate sadness or fatigue.
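
The heuristic in this paragraph can be sketched as a few comparisons against a speaker's neutral baseline. The sketch below assumes pitch, speaking rate, and pause ratio have already been measured upstream. The 15% and 10-point thresholds, and the names `ProsodicFeatures`, `pause_ratio`, and `arousal_label`, are illustrative assumptions, not any vendor's actual logic. Production systems learn such boundaries from data rather than hard-coding them.

```python
from dataclasses import dataclass


@dataclass
class ProsodicFeatures:
    mean_pitch_hz: float     # average fundamental frequency of the utterance
    words_per_minute: float  # speaking pace
    pause_ratio: float       # fraction of audio frames that are silent


def pause_ratio(frame_energies: list[float], silence_threshold: float) -> float:
    """Fraction of frames whose energy falls below a silence threshold."""
    if not frame_energies:
        return 0.0
    silent = sum(1 for energy in frame_energies if energy < silence_threshold)
    return silent / len(frame_energies)


def arousal_label(sample: ProsodicFeatures, baseline: ProsodicFeatures) -> str:
    """Hypothetical rule set comparing one utterance to the speaker's neutral baseline."""
    higher_pitch = sample.mean_pitch_hz > baseline.mean_pitch_hz * 1.15
    faster = sample.words_per_minute > baseline.words_per_minute * 1.15
    slower = sample.words_per_minute < baseline.words_per_minute * 0.85
    longer_pauses = sample.pause_ratio > baseline.pause_ratio + 0.10

    if faster and higher_pitch:
        return "high_arousal"  # could be stress or excitement
    if slower and longer_pauses:
        return "low_arousal"   # could be sadness or fatigue
    return "neutral"


baseline = ProsodicFeatures(mean_pitch_hz=180, words_per_minute=150, pause_ratio=0.20)
print(arousal_label(ProsodicFeatures(220, 190, 0.15), baseline))  # high_arousal
print(arousal_label(ProsodicFeatures(170, 110, 0.40), baseline))  # low_arousal
print(pause_ratio([0.02, 0.40, 0.01, 0.35], silence_threshold=0.05))  # 0.5
```

Note that the same "high_arousal" label covers both stress and excitement. Prosody alone often cannot separate the two, which is one source of the false positives discussed later.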

Amazon’s Echo devices use machine learning models trained on thousands of hours of emotional speech data. These models identify subtle changes in vocal characteristics that human ears might miss. Google Assistant employs similar technology, analyzing not just individual words but the emotional undertones of entire conversations.
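
The production models are not public and are likely deep neural networks. The sketch below is a much smaller stand-in for the same supervised-learning idea: a nearest-centroid classifier that averages the feature vectors for each emotion label, then assigns a new utterance to the closest average. The three features (pitch and speaking rate relative to the speaker's baseline, plus pause ratio) and the handful of training examples are made up for illustration.

```python
import math


def train_centroids(samples: list[tuple[list[float], str]]) -> dict[str, list[float]]:
    """Average the feature vectors that share each emotion label."""
    grouped: dict[str, list[list[float]]] = {}
    for features, label in samples:
        grouped.setdefault(label, []).append(features)
    return {
        label: [sum(column) / len(vectors) for column in zip(*vectors)]
        for label, vectors in grouped.items()
    }


def predict(centroids: dict[str, list[float]], features: list[float]) -> str:
    """Return the label whose centroid is nearest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


# Hypothetical training data: [relative pitch, relative speaking rate, pause ratio]
training = [
    ([1.20, 1.30, 0.10], "stressed"),
    ([1.30, 1.20, 0.15], "stressed"),
    ([0.90, 0.70, 0.40], "tired"),
    ([0.85, 0.75, 0.35], "tired"),
    ([1.00, 1.00, 0.20], "neutral"),
]
centroids = train_centroids(training)
print(predict(centroids, [1.25, 1.25, 0.12]))  # stressed
print(predict(centroids, [0.88, 0.72, 0.38]))  # tired
```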

The technology builds on decades of research in affective computing, where scientists study how machines can recognize and respond to human emotions. Voice analysis has an advantage over other methods because it is passive: users don’t need to wear sensors or enter their emotional state manually.

Real-World Applications and User Benefits

Smart home integration showcases the practical value of emotionally aware voice assistants. When Alexa detects stress in your voice while you’re asking about your schedule, it might automatically dim the lights and play calming music. If you sound rushed while asking for directions, it could prioritize the fastest route options.
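
One way to express this behavior is a lookup table keyed on the detected emotion and the user's request. The table is gated by the classifier's confidence so that uncertain readings change nothing. The intent names, action names, and 0.7 confidence cutoff below are hypothetical. They are not Alexa or Google Home APIs.

```python
# Hypothetical policy table: (detected emotion, request intent) -> follow-up actions
ACTION_POLICY: dict[tuple[str, str], list[str]] = {
    ("stressed", "get_schedule"): ["dim_lights", "play_calming_music"],
    ("rushed", "get_directions"): ["rank_routes_by_travel_time"],
}


def plan_actions(emotion: str, intent: str, confidence: float,
                 min_confidence: float = 0.7) -> list[str]:
    """Return extra actions to take, or none if the emotion estimate is too uncertain."""
    if confidence < min_confidence:
        return []
    # Return a copy so callers cannot mutate the shared policy table
    return list(ACTION_POLICY.get((emotion, intent), []))


print(plan_actions("stressed", "get_schedule", confidence=0.85))
# ['dim_lights', 'play_calming_music']
print(plan_actions("stressed", "get_schedule", confidence=0.40))
# []
```

Keeping the policy in a table rather than in branching code is one way to make these automations easy to audit and to let users switch individual ones off.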

Healthcare applications show particular promise. Voice assistants can monitor elderly users for signs of depression or cognitive decline by tracking changes in speech patterns over time. Caregivers receive alerts when vocal indicators suggest their loved ones might need additional support.
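
Tracking change over time usually means comparing recent speech measurements with the same person's history rather than with a population average. The sketch below assumes a daily speaking-rate measurement is already available. It flags a drift when the recent average lies two or more standard deviations from the historical mean. The metric, the window sizes, and the threshold are illustrative assumptions, not clinically validated values.

```python
from statistics import mean, stdev


def drift_zscore(history: list[float], recent: list[float]) -> float:
    """How many standard deviations the recent average sits from the personal baseline."""
    baseline_mean = mean(history)
    baseline_sd = stdev(history)  # requires at least two historical values
    if baseline_sd == 0:
        # Simplification: a perfectly constant history gives no spread to compare against
        return 0.0
    return (mean(recent) - baseline_mean) / baseline_sd


def should_alert_caregiver(history: list[float], recent: list[float],
                           threshold: float = 2.0) -> bool:
    """Hypothetical alert rule: flag sustained drift in either direction."""
    return abs(drift_zscore(history, recent)) >= threshold


# Hypothetical daily speaking rates (words per minute) for one user
history = [150, 152, 148, 151, 149, 150, 153, 147]
print(drift_zscore(history, [140, 141, 139]))            # -5.0
print(should_alert_caregiver(history, [140, 141, 139]))  # True
print(should_alert_caregiver(history, [149, 151]))       # False
```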

Customer service scenarios benefit significantly from emotional recognition. When users express frustration, voice assistants can escalate to human representatives more quickly or offer additional troubleshooting options. Companies report higher satisfaction rates when their voice systems respond appropriately to user emotions.
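
A simple escalation rule might hand the conversation to a person once frustration is detected on several consecutive turns. This avoids reacting to a single misread utterance. The function name and the two-turn threshold are assumptions for illustration.

```python
def should_escalate(turn_emotions: list[str], max_frustrated_streak: int = 2) -> bool:
    """Escalate once 'frustrated' is detected on N consecutive user turns."""
    streak = 0
    for emotion in turn_emotions:
        # Reset the count whenever a turn is not classified as frustrated
        streak = streak + 1 if emotion == "frustrated" else 0
        if streak >= max_frustrated_streak:
            return True
    return False


print(should_escalate(["neutral", "frustrated", "frustrated"]))  # True
print(should_escalate(["frustrated", "neutral", "frustrated"]))  # False
```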

Mental health monitoring represents another emerging application. Some voice assistants can detect early warning signs of anxiety or depression episodes, potentially alerting healthcare providers or suggesting coping strategies. While not replacing professional care, this technology offers valuable supplementary monitoring.

Privacy Concerns and Technical Limitations

Emotional voice recognition raises significant privacy questions. These systems require continuous audio analysis, creating detailed emotional profiles of users. Companies must balance functionality with privacy protection, ensuring emotional data doesn’t become a surveillance tool.

Most manufacturers address these concerns through local processing, where emotional analysis happens on the device rather than in the cloud. Apple’s Siri processes emotional cues locally on iPhones and HomePods, preventing sensitive emotional data from leaving the device. Google offers similar local processing options for Nest devices.

Technical challenges persist despite rapid advancement. Cultural differences in emotional expression can confuse recognition systems trained primarily on one demographic. Accents, languages, and regional speaking patterns affect accuracy. Background noise, multiple speakers, and poor audio quality also impact performance.

False positives create another concern. Systems might misinterpret excitement as stress or interpret normal variation in speaking patterns as emotional distress. Manufacturers continue refining their algorithms to reduce these errors while improving overall accuracy.
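
Reducing false positives often comes down to where the decision threshold sits on the model's confidence score. The sketch below counts outcomes for a binary "stressed" detector at two thresholds. Raising the threshold removes the false positive but misses one genuinely stressed utterance. The scores and labels are invented for illustration.

```python
def confusion_counts(scores: list[float], actual: list[bool],
                     threshold: float) -> dict[str, int]:
    """Count true/false positives and negatives for a 'stressed' detector."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for score, is_stressed in zip(scores, actual):
        predicted = score >= threshold
        if predicted and is_stressed:
            counts["tp"] += 1
        elif predicted:
            counts["fp"] += 1  # e.g. excitement misread as stress
        elif is_stressed:
            counts["fn"] += 1  # stress the system missed
        else:
            counts["tn"] += 1
    return counts


# Hypothetical model scores and ground-truth labels for five utterances
scores = [0.90, 0.80, 0.65, 0.60, 0.30]
actual = [True, True, False, True, False]

print(confusion_counts(scores, actual, threshold=0.5))
# {'tp': 3, 'fp': 1, 'fn': 0, 'tn': 1}
print(confusion_counts(scores, actual, threshold=0.7))
# {'tp': 2, 'fp': 0, 'fn': 1, 'tn': 2}
```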

Integration with Smart Home Ecosystems

Voice assistants with emotional intelligence are transforming smart home experiences. When integrated with other connected devices, they create more responsive living environments. Thread, a low-power mesh networking protocol used by many Matter-compatible devices, lets compatible voice assistants communicate with nearby smart devices over the local network. This can shorten the delay between a detected emotional cue and a device’s response.

Lighting systems adjust automatically based on detected moods. Thermostats modify temperatures when stress indicators suggest users might benefit from environmental changes. Entertainment systems curate content to match emotional states: upbeat music when happiness is detected, relaxing content during stressful periods.

Kitchen appliances respond to emotional cues during meal preparation. If voice assistants detect frustration while someone cooks, they might suggest simpler recipes or provide additional cooking tips. Smart speakers can adjust their response style based on the user’s emotional state, offering more detailed instructions when stress is detected.

The technology also complements advances in other voice products. Real-time language translation in wireless earbuds, for example, could eventually incorporate emotional context, providing more nuanced cross-cultural communication.

The Future of Emotionally Intelligent Voice Technology

Major manufacturers are expanding emotional recognition capabilities across their product lines. Amazon plans to integrate these features into more Echo devices and Fire TV products. Google is developing more sophisticated emotional analysis for Nest speakers and Android devices. Apple continues refining Siri’s emotional awareness across iPhones, iPads, and HomePods.

Healthcare partnerships are accelerating development. Voice assistant makers are collaborating with medical institutions to create specialized applications for patient monitoring and therapeutic support. These partnerships could lead to voice assistants that detect early signs of various health conditions through speech analysis.

The technology will likely become more personalized over time. Instead of using generic emotional models, future systems might learn individual users’ unique emotional expressions. This personalization could dramatically improve accuracy while providing more tailored responses.
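
One simple form of personalization is to keep a running baseline for each user and score new speech relative to it. A naturally fast or high-pitched speaker is then not flagged as stressed by default. The sketch below keeps an exponentially weighted mean and variance of a single feature (here, pitch). The class name, the choice of feature, and the smoothing factor `alpha` are assumptions. Real systems would more likely adapt learned models than track a single statistic.

```python
import math
from typing import Optional


class PersonalBaseline:
    """Exponentially weighted mean and variance of one vocal feature for one user."""

    def __init__(self, alpha: float = 0.1) -> None:
        self.alpha = alpha  # weight given to each new observation
        self.mean: Optional[float] = None
        self.variance = 0.0

    def update(self, value: float) -> None:
        if self.mean is None:  # the first observation seeds the baseline
            self.mean = value
            return
        diff = value - self.mean
        increment = self.alpha * diff
        self.mean += increment
        # Incremental exponentially weighted variance update
        self.variance = (1 - self.alpha) * (self.variance + diff * increment)

    def zscore(self, value: float) -> float:
        """Distance from this user's typical value, in standard deviations."""
        if self.mean is None or self.variance == 0:
            return 0.0
        return (value - self.mean) / math.sqrt(self.variance)


baseline = PersonalBaseline(alpha=0.5)
for pitch_hz in (100, 200):
    baseline.update(pitch_hz)
print(baseline.mean, baseline.variance)  # 150.0 2500.0
print(baseline.zscore(250))              # 2.0
```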

Integration with other emerging technologies promises even more sophisticated applications. Combining emotional voice recognition with computer vision and biometric sensors could create comprehensive emotional awareness systems that respond to multiple indicators simultaneously.
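
A common way to combine modalities is late fusion. Each sensor produces its own probability for each emotion, and the system takes a weighted average. The modality names, weights, and scores below are hypothetical. Weights are renormalized over whichever modalities are present, so a missing camera feed does not skew the result.

```python
def fuse_modalities(scores: dict[str, dict[str, float]],
                    weights: dict[str, float]) -> dict[str, float]:
    """Weighted average of per-modality emotion probabilities (late fusion)."""
    # Only use modalities that both reported a reading and have a positive weight
    present = [m for m in scores if weights.get(m, 0) > 0]
    total_weight = sum(weights[m] for m in present)
    if total_weight == 0:
        return {}
    labels = {label for m in present for label in scores[m]}
    return {
        label: sum(weights[m] * scores[m].get(label, 0.0) for m in present) / total_weight
        for label in labels
    }


weights = {"voice": 0.5, "face": 0.3, "heart_rate": 0.2}
readings = {
    "voice": {"stressed": 0.8, "calm": 0.2},
    "face": {"stressed": 0.4, "calm": 0.6},
    "heart_rate": {"stressed": 0.9, "calm": 0.1},
}
fused = fuse_modalities(readings, weights)
print(max(fused, key=fused.get))  # stressed
```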

As emotional intelligence becomes standard in voice assistants, users will expect more empathetic and contextually appropriate interactions. This evolution represents a fundamental shift from command-based interfaces to genuinely conversational AI companions that understand not just what we want, but how we feel about getting it.

Frequently Asked Questions

How do voice assistants detect emotions?

They analyze vocal patterns like pitch, pace, and pauses using machine learning algorithms trained on emotional speech data.

Are emotional voice recognition features private?

To protect privacy, most manufacturers process emotional data locally on the device rather than sending it to cloud servers.